I used to work in the manufacturing industry. Some processes were so complex that even a very experienced and competent engineer would not necessarily know how to identify the best settings for the manufacturing equipment.

You could make an educated guess at the optimal settings from general principles, but that was not sufficient. You needed very precise values for the process parameters, taking into account the specifics of the manufacturing equipment.

Or you could guess the best settings, assess the results, and then try to further improve the settings by modifying one factor at a time. But this could be a lengthy process, and you still might not find the optimal settings.

Even if you have the optimal settings for current conditions, to ensure that the plant remains at the leading edge, new technologies will need to be adopted -- introducing unknown situations and new problems. Ultimately, you need a comprehensive model that helps you understand, very precisely, how the system works. You obviously cannot get that by adjusting one factor at a time and experimenting in a disorganized way.

Engineers need a tool that can help them build models in a very practical, cost-effective, and flexible way, so that they can be sure that whenever a new product needs to be manufactured the right process settings are quickly identified. Design of Experiments (DOE) is the ideal tool for that.

Choosing the Right Design

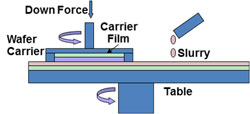

Polishing processes in the semiconductor industry are one example of a very complex and critical manufacturing process. The objective is to produce wafers that are as flat as possible in order to maintain high yield rates. High first-pass yield rates are absolutely essential to ensure that a plant is cost-effective, and that the customer is satisfied with product quality.

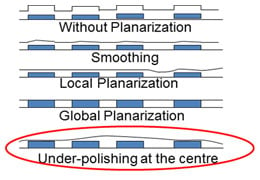

I was involved in a DOE in the polishing sector of a microelectronics facility. "Liner" residues after wafer polishing (under-polishing at the centre) made the yield rate and consistency for a particular material unsatisfactory. The degree of uniformity had to be improved since inadequate polishing/planarization impacted subsequent manufacturing processes.

Six process parameters were considered. Four of them (Down Force, Back Force, Carrier Velocity and Table Velocity) will be familiar to polishing engineers and are easy to adjust. The other two (Type of Pad, Oscillations) were more innovative, but they did not necessarily involve a significant increase in process costs.

The main objective was achieving a higher degree of uniformity, but it was also important to maintain a high level of productivity.

Our first step was to identify the parameter settings to test. To remain realistic, the settings for a parameter could not be too far apart, but to make a real effect easy to detect they could not be too close together, either. For each parameter we selected two levels, making our experiment a two-level DOE. This reduced the number of tests that needed to be performed, and we assumed that the effects between our two levels were likely to be mostly linear.

Next we needed to consider the experimental design and the number of tests we would need to perform. This is very important because the amount of time available to do the experiments is often very limited, and also because we want to minimize the number of parts that are manufactured during the tests, since those parts cannot be sold to customers.

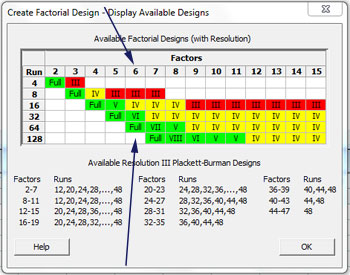

Minitab's table of available designs is very helpful in choosing the right design. To display it, choose Stat > DOE > Factorial > Create Factorial Design and, in the dialog box that is displayed, click Display Available Designs.

With six parameters, we could go for a 64-run full factorial design (2⁶), but that would be too expensive. We could choose the half-fraction design (2⁶⁻¹), but 32 runs is still too expensive. So we chose a 16-run, quarter-fraction design (2⁶⁻²).
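The run counts quoted above follow from the rule that a two-level design with k factors and a 1/2^p fraction needs 2^(k-p) runs. A minimal sketch of that arithmetic (the function and variable names are illustrative, not part of Minitab):

```python
def runs(k, p=0):
    """Runs in a two-level design with k factors, keeping a 1/2**p fraction."""
    return 2 ** (k - p)


k = 6  # six polishing parameters
run_counts = {p: runs(k, p) for p in range(4)}

for p, n in run_counts.items():
    label = "full factorial" if p == 0 else f"1/{2 ** p} fraction"
    print(f"2^({k}-{p}) {label}: {n} runs")
```

Each additional halving of the design cuts the cost in two, but it also increases the amount of confounding between effects.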

The green zone in the table above is the safest choice, but it is also the most expensive one, so our 16-run design is the best compromise between cost and risk.

The 8-run design (2⁶⁻³) would have been even cheaper, but the red color indicates a higher degree of risk: with just 8 runs, some main effects are confounded with two-factor interactions, making the analysis more difficult and riskier. The 16-run design is safer (as the yellow color suggests): because main effects are not confounded with two-factor interactions, only with higher-order interactions that are usually negligible, we can estimate the main effects with much less risk.
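The difference between the two fractions can be checked numerically. The sketch below builds both designs in coded units (-1/+1) and lists which effects share the same column. The post does not say which generators were used, so the generators here (E = ABC, F = BCD for 16 runs; D = AB, E = AC, F = BC for 8 runs) are assumptions, chosen because they are a common textbook choice, and the letters A to F are placeholders for the six polishing parameters.

```python
from itertools import combinations, product

import numpy as np


def alias_report(columns):
    """Return (main-vs-2FI aliases, 2FI-vs-2FI aliases) for +/-1 columns."""
    n = len(next(iter(columns.values())))
    two_fi = {a + b: columns[a] * columns[b] for a, b in combinations(columns, 2)}

    def same(u, v):
        # Two effects are aliased when their columns are identical or sign-flipped.
        return abs(int(u @ v)) == n

    main = [(m, t) for m, u in columns.items()
            for t, v in two_fi.items() if same(u, v)]
    inter = [(s, t) for (s, u), (t, v) in combinations(two_fi.items(), 2)
             if same(u, v)]
    return main, inter


# 16-run quarter fraction: full 2^4 in A..D, assumed generators E=ABC, F=BCD.
A, B, C, D = np.array(list(product([-1, 1], repeat=4))).T
quarter = dict(A=A, B=B, C=C, D=D, E=A * B * C, F=B * C * D)

# 8-run eighth fraction: full 2^3 in A..C, assumed generators D=AB, E=AC, F=BC.
a, b, c = np.array(list(product([-1, 1], repeat=3))).T
eighth = dict(A=a, B=b, C=c, D=a * b, E=a * c, F=b * c)

main16, inter16 = alias_report(quarter)
main8, inter8 = alias_report(eighth)

print("16 runs, main effects aliased with 2FIs:", main16)
print("16 runs, aliased 2FI pairs:", inter16)
print(" 8 runs, main effects aliased with 2FIs:", main8)
```

Under these assumed generators, the 16-run design keeps every main effect clear of two-factor interactions, while the 8-run design does not. The two-factor interactions in the 16-run design are still aliased with one another.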

Two-factor interaction effects in this design are sometimes confounded with other two-factor interactions. But when a group of confounded interactions turns out to be significant, the interaction that involves statistically significant main factors is more likely to be the real one than the interactions whose factors have no significant main effect. This is called the ‘heredity’ principle, and it is very helpful for identifying which interaction matters when it is ‘confounded’ with other two-factor interactions.
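As a rough illustration of the heredity principle, the sketch below ranks a group of confounded two-factor interactions by how many of their parent factors have significant main effects. The alias group and the list of significant factors are hypothetical, not results from this experiment, and the ranking is only a heuristic for deciding what to investigate first.

```python
def rank_by_heredity(alias_group, significant_mains):
    """Sort aliased interactions by number of significant parent main effects."""
    def score(term):
        # Each letter of a term such as "BC" is one parent factor.
        return sum(factor in significant_mains for factor in term)

    return sorted(alias_group, key=score, reverse=True)


# Hypothetical example: three interactions that share one column in the design.
alias_group = ["AE", "BC", "DF"]
significant_mains = {"B", "C"}  # hypothetical significant main effects

ranked = rank_by_heredity(alias_group, significant_mains)
print(ranked)  # the most plausible candidate comes first
```

Here BC would be the first candidate, because both of its parent factors are significant on their own. If the ranking is not clear-cut, extra runs are usually needed to separate the confounded interactions.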

I'll explain more about this DOE in my next post!